In large-scale LLM development, improving model quality depends not only on data quantity but also on data quality and specificity. While pretraining datasets often contain a vast range of information, they can lack the conceptual targeting needed to strengthen particular skills, such as reasoning or programming proficiency. To address this challenge, we designed an approach for scalable, concept-driven synthetic data generation: a workflow that enables researchers to generate data aligned with desired model capabilities. As an initial application, we construct a pretraining-scale synthetic dataset consisting of 15 million Python programming problems, released as the Nemotron-Pretraining-Code-Concepts subset of the Nemotron-Pretraining-Specialized-v1.1 dataset. We show that including these data in the final 100 billion tokens of Nemotron-Nano-v3 pretraining yields a six-point gain on the HumanEval benchmark.

Our workflow centers on a curated taxonomy of programming knowledge derived from large-scale annotation of the Nemotron‑Pretraining‑Code‑{v1,v2} datasets. This taxonomy encodes thousands of programming concepts organized hierarchically, from fundamental constructs (e.g., strings, recursion) to advanced algorithmic and data-structure patterns. Using this taxonomy, developers can perform targeted data generation through the combination and distillation of selected concepts, enabling experimenters to control difficulty, diversity, and conceptual balance across generated data.
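To make the idea of a hierarchical taxonomy and concept combination concrete, here is a minimal sketch. It assumes concepts are stored as dot-notation paths (the form shown in Figure 2) and that combinations are drawn uniformly at random; both are illustrative assumptions, not a description of the actual pipeline. The helper names `build_tree` and `sample_combinations` are hypothetical, and only three of the listed concept paths appear in this post.

```python
import itertools
import random

# Concept paths in dot-notation. The first three appear in Figure 2;
# the last two are hypothetical placeholders added for illustration.
CONCEPTS = [
    "data-structures.sets.operation",
    "algorithms.arrays.processing",
    "algorithms.geometry.computational",
    "data-structures.strings.formatting",
    "algorithms.recursion.basic",
]


def build_tree(paths):
    """Nest dotted concept paths into a dict-of-dicts hierarchy."""
    tree = {}
    for path in paths:
        node = tree
        for part in path.split("."):
            node = node.setdefault(part, {})
    return tree


def sample_combinations(paths, size, count, seed=0):
    """Draw up to `count` distinct concept combinations of the given size.

    Uniform sampling is an assumption; a real workflow could weight
    combinations to control difficulty or conceptual balance.
    """
    rng = random.Random(seed)
    all_combos = list(itertools.combinations(sorted(paths), size))
    return rng.sample(all_combos, min(count, len(all_combos)))


tree = build_tree(CONCEPTS)
combos = sample_combinations(CONCEPTS, size=3, count=4)
```

Enumerating every combination is only practical for a small concept list like this one; with thousands of concepts, a sampler that draws combinations directly would be the more realistic choice.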

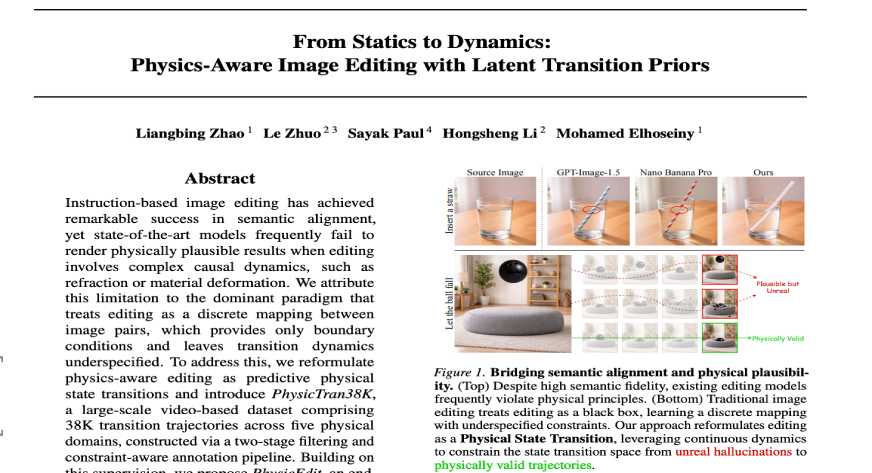

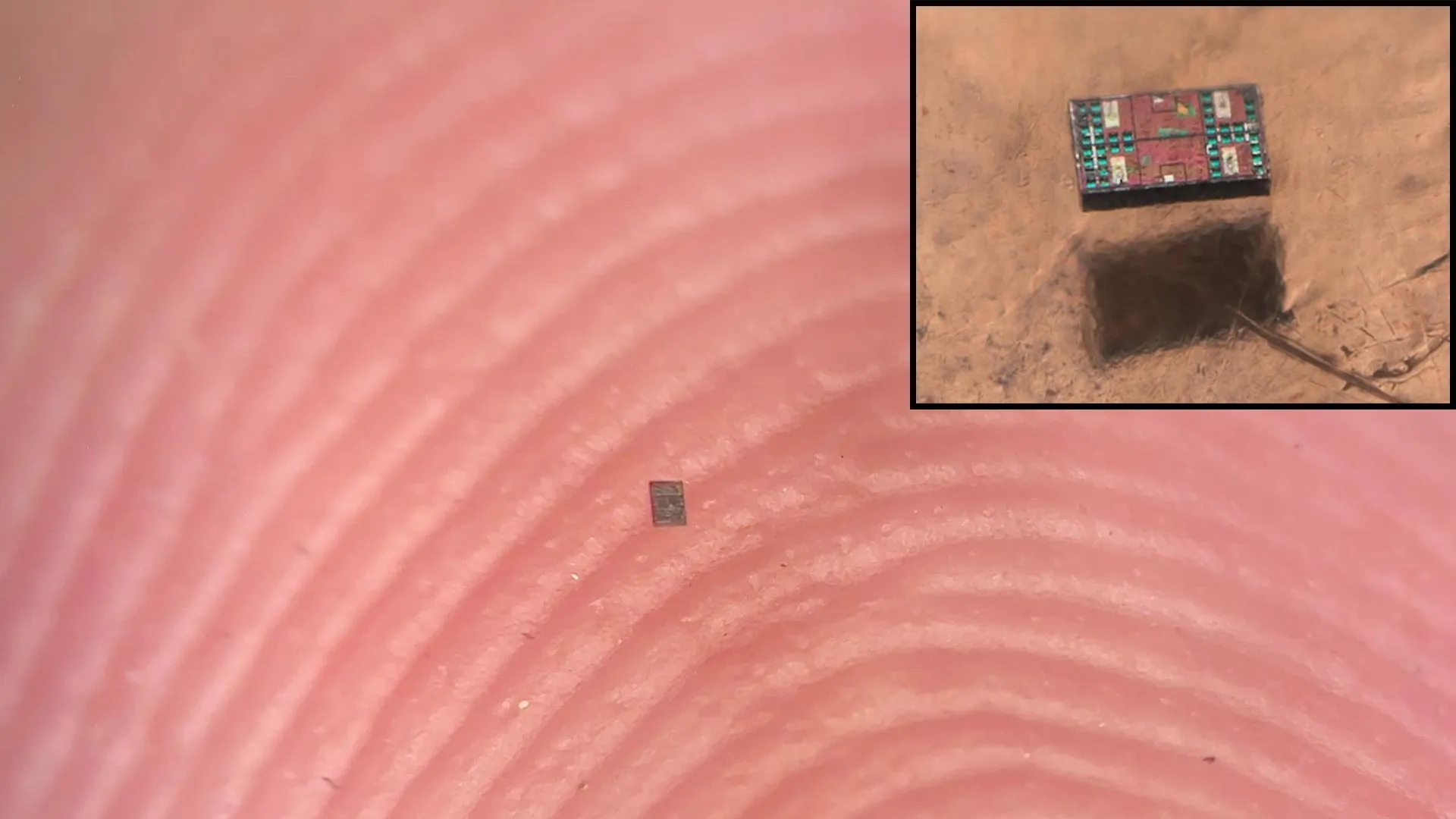

To evaluate this workflow in practice, we applied it to create a large-scale synthetic dataset aimed at enhancing foundational Python programming skills in LLM pretraining. We first identified 91 core concepts most relevant to the HumanEval benchmark (though still broadly representative of real programming knowledge) by classifying its code-completion prompts within our taxonomy. Guided by combinations of these concepts, we generated approximately 15 million synthetic Python programming problems, each of which was validated as syntactically valid Python code (using Python’s ast.parse function). Figure 1 summarizes how we applied our workflow to synthesize the Code Concepts dataset. Figure 2 visualizes the problem generation process and shows an example of a concept seed and synthetic problem pair.
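The validation step mentioned above can be sketched as follows. This is a minimal illustration of an `ast.parse`-based check, not the exact filter used to build the dataset; the function name `is_valid_python` is hypothetical. Note that `ast.parse` only confirms that the source parses, so it does not execute the code or catch runtime errors.

```python
import ast


def is_valid_python(source: str) -> bool:
    """Return True if `source` parses as Python. This checks syntax only."""
    try:
        ast.parse(source)
    except (SyntaxError, ValueError):
        # SyntaxError covers malformed code; some Python versions raise
        # ValueError for source strings containing null bytes.
        return False
    return True


samples = [
    "def add(a, b):\n    return a + b\n",  # parses
    "def add(a, b:\n    return a + b\n",   # unbalanced parenthesis
    "undefined_name + 1\n",                # parses, but would fail at runtime
]
results = [is_valid_python(s) for s in samples]
```

The third sample shows the limit of this kind of check: the code is syntactically valid, so it passes, even though running it would raise a `NameError`.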

Figure 1: Concept-driven data generation used to generate the Code Concepts dataset. Using a taxonomy constructed from the Nemotron-Pretraining-Code-{v1,v2} datasets, we extract programming concepts from HumanEval prompts and use those for open-ended generation. Our workflow resulted in ~15M Python programming problems derived from 91 different programming concepts.

Figure 2: A visual representation of Python programming problem generation as part of our concept-driven data-generation workflow. A prompt is constructed from a combination of concepts (included in the blue box and represented using dot-notation), an instruction, and some constraints. Using GPT-OSS 120B, a problem is generated, then parsed and filtered for quality. In this example, the concepts data-structures.sets.operation, algorithms.arrays.processing, and algorithms.geometry.computational combine to produce a problem that involves counting distinct convex‑hull areas from all sufficiently large subsets of a list of points.
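As a rough illustration of the prompt structure described in the Figure 2 caption, the sketch below assembles a prompt from a concept combination, an instruction, and a list of constraints. The template layout, the `build_prompt` helper, and the instruction and constraint wording are all hypothetical; the actual prompt used with GPT-OSS 120B is not reproduced here.

```python
def build_prompt(concepts, instruction, constraints):
    """Assemble a generation prompt from concept seeds, an instruction, and constraints."""
    if not concepts:
        raise ValueError("at least one concept is required")
    concept_lines = "\n".join(f"- {c}" for c in concepts)
    constraint_lines = "\n".join(f"- {c}" for c in constraints)
    return (
        f"Concepts:\n{concept_lines}\n\n"
        f"Instruction:\n{instruction}\n\n"
        f"Constraints:\n{constraint_lines}\n"
    )


prompt = build_prompt(
    # Concept seeds taken from the Figure 2 example.
    concepts=[
        "data-structures.sets.operation",
        "algorithms.arrays.processing",
        "algorithms.geometry.computational",
    ],
    # Hypothetical wording, for illustration only.
    instruction="Write a self-contained Python programming problem that combines all listed concepts.",
    constraints=[
        "Include a function signature and a docstring.",
        "Use only the Python standard library.",
    ],
)
```

The resulting string would then be sent to the generator model, and the response parsed and filtered before being added to the dataset.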

To validate these generated data, we included 10 billion tokens of the Code Concepts dataset in the final 100 billion tokens of Nemotron-Nano-v3 pretraining. After training and evaluation, we find that the resulting model achieves a six-point improvement in HumanEval accuracy, from 73 to 79. Figure 3 compares base-model evaluations of Nemotron-Nano-v3 with and without the Code Concepts dataset. Beyond quantitative gains, qualitative assessment reveals stronger performance across varied programming concepts (e.g., graph algorithms, set operations) and improved handling of edge cases and execution reasoning.
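For readers who want the numbers above in relative terms, the short calculation below derives the blend fraction and the relative change from the reported figures. It uses only the values stated in this post (10 billion of 100 billion tokens, HumanEval 73 to 79); the variable names are illustrative.

```python
# Values reported in this post.
code_concepts_tokens = 10e9
total_tokens = 100e9
baseline_score = 73.0
new_score = 79.0

# Share of the final pretraining phase made up of Code Concepts data.
blend_fraction = code_concepts_tokens / total_tokens

# Absolute gain in accuracy points, and the same gain relative to the baseline.
absolute_gain = new_score - baseline_score
relative_gain = absolute_gain / baseline_score

# Fraction of previously failed HumanEval problems that are now solved,
# treating the scores as percentages of problems passed.
error_reduction = absolute_gain / (100.0 - baseline_score)

print(f"blend fraction:  {blend_fraction:.0%}")
print(f"absolute gain:   {absolute_gain:.0f} points")
print(f"relative gain:   {relative_gain:.1%}")
print(f"error reduction: {error_reduction:.1%}")
```

Under these figures, a 10% share of the final-phase tokens corresponds to roughly an 8% relative accuracy gain and about a 22% reduction in failed problems.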

We view this dataset as a validation of the broader concept-driven generation workflow rather than a one-off artifact. By releasing both the dataset and the underlying taxonomy under a permissive open license (CC‑BY‑4.0), we hope to enable the community to extend this method to other domains and use cases in scalable, targeted LLM pretraining.

Figure 3: Base-model benchmark evaluation results obtained after performing a 100 billion token data-ablation experiment using ~10 billion tokens of the Code Concepts data. The model trained on the Code Concepts data achieves a six-point gain on HumanEval, while most other benchmarks are unchanged.