Last Updated on March 11, 2026 by Editorial Team

Author(s): DrSwarnenduAI

Originally published on Towards AI.

Nobody Invented Attention. A Frustrated PhD Student Ran Out of Other Options.

Dzmitry Bahdanau was not trying to invent the architecture that would eventually run inside every large language model on earth.

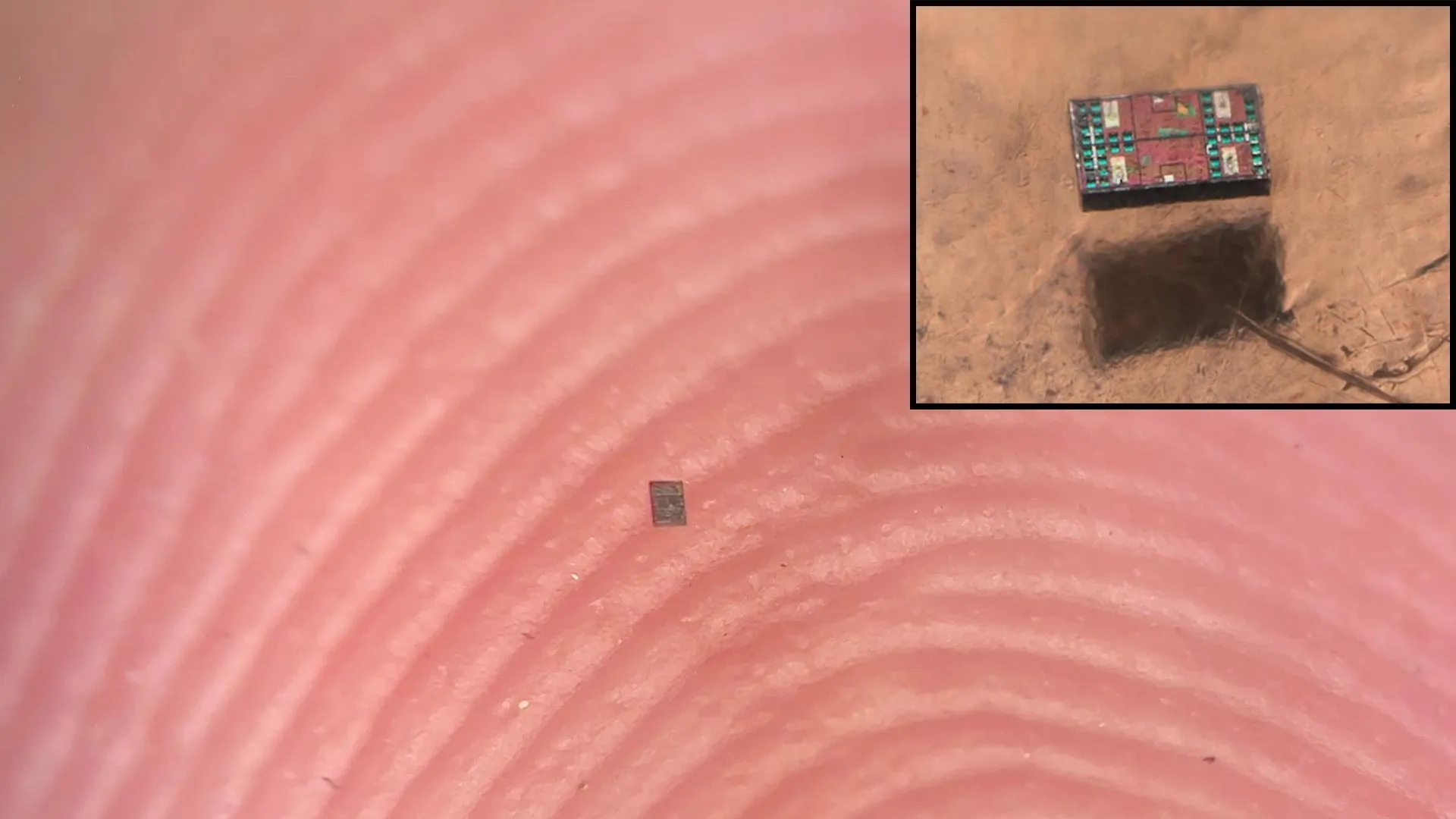

This article traces the work of Dzmitry Bahdanau, who set out to improve neural machine translation of long sentences and ran into the limits of encoding long-range dependencies. It examines the mathematical constraints of traditional RNN architectures that led to the attention mechanism, which redefined how models handle information and manage memory in translation tasks. Its central claim is that the innovation came from addressing a practical question in machine translation rather than from a purely theoretical construct.

Note: Article content contains the views of the contributing authors and not Towards AI.