Author(s): Krishnan Srinivasan

Originally published on Towards AI.

(A practical, end-to-end guide to building a ground-truth-based evaluation pipeline, complete with synthetic data and runnable SQL on Snowflake)

In the earlier parts of this Agentic AI series, we explored how AI systems can reason, use tools, retrieve knowledge, and orchestrate complex workflows. But as AI systems become more capable and autonomous, an equally important question starts to take center stage.

How do we evaluate whether the AI actually performed correctly?

Whether the task is handled by a single model, an AI pipeline, or a multi-agent workflow, the outcome still needs to be measured against something objective. In other words, capability without evaluation is incomplete.

Imagine you have built an AI pipeline that reads supplier invoices and pulls out three key fields: 📄 Invoice ID, 💰 Total Amount, 🏢 Supplier Name. The extraction runs. The data lands in your database. But now comes the hard question:

How do you know if what was extracted is actually correct?

Manually checking thousands of documents does not scale. Rule-based validation is brittle. Simple string comparisons fail when formatting differences appear.

This is where LLM-as-a-Judge comes in ⚖️

Instead of writing fragile validation logic or manually auditing records, we can use a language model to act as an evaluator. The model compares what the AI pipeline extracted against ground truth (human-verified values) and produces a structured evaluation with:

- an accuracy score

- a match classification

- a short explanation for the decision

What is LLM-as-a-Judge?

LLM-as-a-Judge is an evaluation pattern where you use a large language model not to do the primary task, but to grade the output of another model (or pipeline) doing the primary task.

It has become popular in production AI systems because:

It scales: you can evaluate thousands of records without a human reviewer for every one.

It is flexible: it can handle fuzzy matches, formatting differences, and partial answers that a simple string comparison would flag as wrong.

It is auditable: you get a score AND a human-readable explanation for every decision.

Without ground truth, LLM-as-a-Judge can only check plausibility, i.e., whether the extracted value looks reasonable against the source document. With ground truth (known-correct values), it becomes a true accuracy measurement.

In this post, we walk through a complete end-to-end implementation: creating the evaluation tables, generating synthetic invoice data with varied extraction quality, building the LLM-as-a-Judge function in Snowflake Cortex, running the evaluation pipeline, and analysing the results.

The outcome is a closed-loop evaluation framework where AI outputs are continuously measured, monitored, and improved. This is an essential capability as Agentic AI systems become more deeply embedded in enterprise workflows.

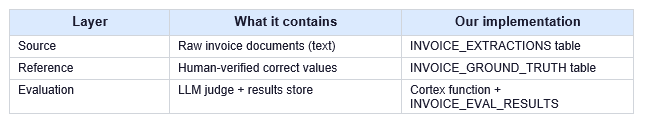

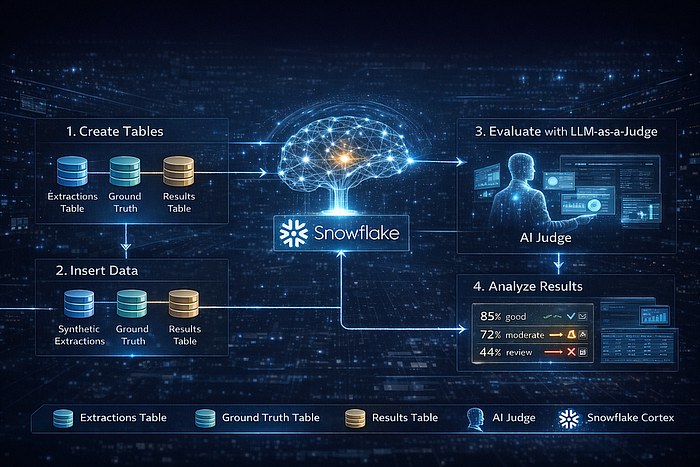

The full pipeline has three layers:

End-to-End LLM-as-a-Judge Evaluation Process

This diagram illustrates how AI-extracted invoice data is evaluated end-to-end using LLM-as-a-Judge inside Snowflake Cortex. AI-generated extractions and human-verified ground truth are stored as structured tables and fed into Cortex, where a deterministic LLM acts as an impartial evaluator. Each field is scored independently, producing explainable, auditable results that flow into analytics and dashboards. The outcome is a closed-loop, enterprise-grade evaluation pipeline that makes document AI accuracy measurable, actionable, and continuously improvable, entirely within Snowflake.

Implementation Steps

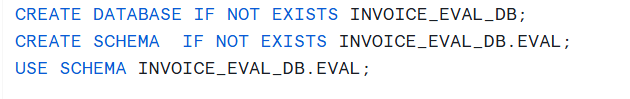

Initial Setup:

We will begin by creating a dedicated database and schema for this walkthrough.
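A minimal sketch of that setup is shown here. The database and schema names (`LLM_JUDGE_DEMO`, `INVOICE_EVAL`) are placeholders chosen for illustration, not names taken from the original code pack, so substitute your own.

```sql
-- Hypothetical names: replace with the database and schema you prefer.
CREATE DATABASE IF NOT EXISTS LLM_JUDGE_DEMO;
CREATE SCHEMA IF NOT EXISTS LLM_JUDGE_DEMO.INVOICE_EVAL;

-- Make the new schema the default for the rest of the worksheet.
USE SCHEMA LLM_JUDGE_DEMO.INVOICE_EVAL;
```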

We will now proceed with the implementation steps.

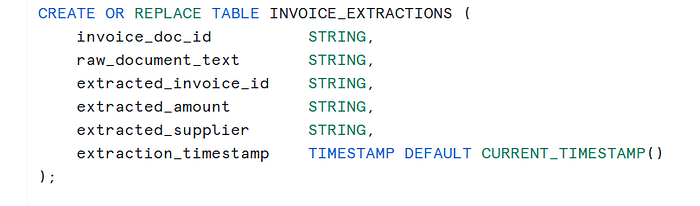

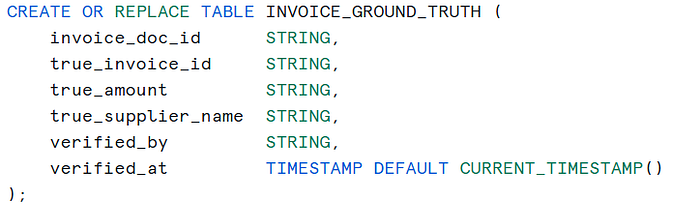

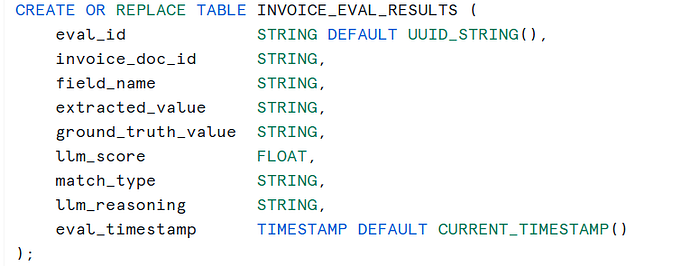

Step 1: Create the Tables

We need three tables. The extractions table holds what your AI pipeline pulled from each document. The ground truth table holds the correct answers, verified by a human. The results table is where the judge writes its scores.

1a. Extractions Table: This is the output of your existing invoice extraction pipeline. For this tutorial, we will populate it with synthetic data that has a deliberate mix of correct, partially-correct, and wrong extractions.

1b. Ground Truth Table: This is your reference dataset containing the known-correct values. In a real project, a small team of reviewers annotates a representative sample. Even 50 to 100 verified invoices give you a meaningful benchmark.

1c. Evaluation Results Table: The judge will write one row per field per invoice. Each row captures the extracted value, the ground truth, the score (0.0 to 1.0), a match type category, and a plain-English explanation.
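One possible shape for the three tables is sketched below. Only `INVOICE_EVAL_RESULTS`, `field_name`, `match_type`, `llm_score`, and `eval_timestamp` are names that appear in this article; the other table and column names (`INVOICE_EXTRACTIONS`, `INVOICE_GROUND_TRUTH`, `doc_id`, `document_text`, and so on) are assumptions made for illustration.

```sql
-- 1a. Output of the extraction pipeline (table name is an assumption).
-- Values are stored as text so that formatting differences survive for the judge.
CREATE OR REPLACE TABLE INVOICE_EXTRACTIONS (
    doc_id         VARCHAR NOT NULL,
    invoice_id     VARCHAR,
    total_amount   VARCHAR,
    supplier_name  VARCHAR,
    document_text  VARCHAR      -- original document text, passed to the judge as context
);

-- 1b. Human-verified reference values (table name is an assumption).
CREATE OR REPLACE TABLE INVOICE_GROUND_TRUTH (
    doc_id         VARCHAR NOT NULL,
    invoice_id     VARCHAR,
    total_amount   VARCHAR,
    supplier_name  VARCHAR
);

-- 1c. One row per field per invoice, written by the judge.
CREATE OR REPLACE TABLE INVOICE_EVAL_RESULTS (
    doc_id              VARCHAR NOT NULL,
    field_name          VARCHAR NOT NULL,
    extracted_value     VARCHAR,
    ground_truth_value  VARCHAR,
    llm_score           FLOAT,        -- 0.0 to 1.0
    match_type          VARCHAR,
    reasoning           VARCHAR,
    eval_timestamp      TIMESTAMP_NTZ DEFAULT CURRENT_TIMESTAMP()
);
```

Keeping `total_amount` as text is a design choice for this sketch: it lets the judge see differences such as thousands separators instead of having them erased by a numeric cast.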

Step 2: Insert Synthetic Data for Ground Truth

Rather than waiting for a real batch of invoices, we will create 10 synthetic invoice documents with a deliberate variety of extraction outcomes. This lets you see the full range of judge scores in one run. For simplicity, let us assume we have already processed the invoices and stored the data in a structured format.

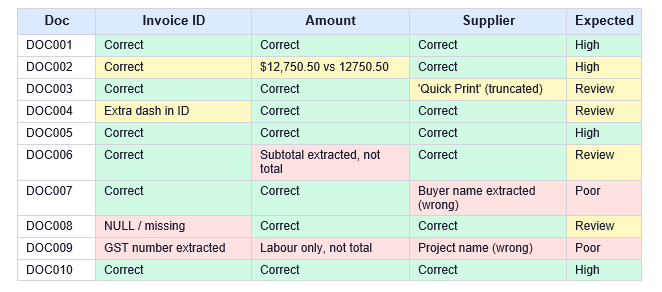

The table below summarizes the error patterns we have intentionally introduced:

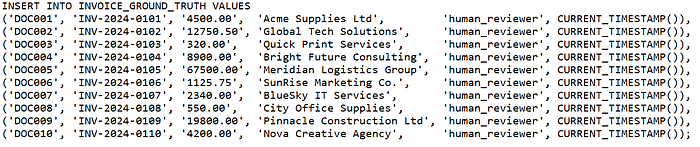

Ground Truth Insert: Insert the verified correct values. In production these come from your annotation tool or a manually curated spreadsheet.
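As a rough illustration, a ground truth insert could look like the following. It assumes a table named `INVOICE_GROUND_TRUTH` with the four columns listed, and the invoice numbers, amounts, and supplier names are invented placeholder values, not the rows from the original code pack.

```sql
-- Placeholder values for illustration only; the real rows live in the code pack.
INSERT INTO INVOICE_GROUND_TRUTH (doc_id, invoice_id, total_amount, supplier_name)
VALUES
    ('DOC001', 'INV-1001', '1250.00', 'Acme Supplies Ltd'),
    ('DOC006', 'INV-1006', '1180.00', 'Northwind Traders'),
    ('DOC007', 'INV-1007', '760.00',  'Contoso Components'),
    ('DOC008', 'INV-1008', '432.50',  'Globex Corporation');
```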

Step 3: Extractions Insert

Let us now insert the AI-extracted values.

Note: In a real pipeline, these values would typically be extracted into structured tables using Document AI capabilities such as AI_EXTRACT. For this walkthrough, we assume the extraction step has already been completed and the results are available in a structured table. The focus of this article is on LLM-as-a-Judge evaluation, not the extraction mechanics. If you would like to understand how document fields can be extracted from invoices using Snowflake Cortex, please refer to my earlier blog on AI_EXTRACT, where the extraction workflow is explained in detail.

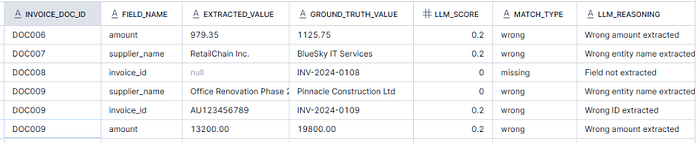

For the purpose of this walkthrough, a few extraction errors have been intentionally introduced in order to verify how the LLM-as-a-Judge evaluation behaves across different scenarios. Notice how some documents have subtle bugs: DOC006 grabs the pre-tax subtotal instead of the total, DOC007 confuses the buyer and seller, DOC008 returns NULL for the invoice ID, and DOC009 completely misidentifies all three fields.

The entries shown above are the ones with issues, ranging from minor discrepancies to completely wrong values. The remaining invoice entries are correct and are not included in the screen capture above. The complete insert list is part of the code pack for this blog. Please note that what counts as partially correct, acceptable, or unacceptable ultimately depends on the specific use case and business requirements.
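To make the error patterns concrete, here is a hedged sketch of what a few extraction rows might look like. It assumes a table named `INVOICE_EXTRACTIONS` with a `document_text` column; every value is an invented placeholder that only mimics the patterns described above (a correct row, a subtotal captured instead of the total, a buyer captured instead of the supplier, and a NULL invoice ID).

```sql
-- Placeholder rows mimicking the described error patterns; not the original data.
INSERT INTO INVOICE_EXTRACTIONS (doc_id, invoice_id, total_amount, supplier_name, document_text)
VALUES
    -- Correct extraction
    ('DOC001', 'INV-1001', '1250.00', 'Acme Supplies Ltd',
     'Invoice INV-1001 from Acme Supplies Ltd. Total due: 1250.00.'),
    -- Pre-tax subtotal captured instead of the total
    ('DOC006', 'INV-1006', '1000.00', 'Northwind Traders',
     'Invoice INV-1006 from Northwind Traders. Subtotal: 1000.00. Tax: 180.00. Total due: 1180.00.'),
    -- Buyer captured instead of the supplier
    ('DOC007', 'INV-1007', '760.00', 'Fabrikam Retail',
     'Invoice INV-1007. Seller: Contoso Components. Bill to: Fabrikam Retail. Total due: 760.00.'),
    -- Invoice ID missing from the extraction
    ('DOC008', NULL, '432.50', 'Globex Corporation',
     'Invoice INV-1008 from Globex Corporation. Total due: 432.50.');
```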

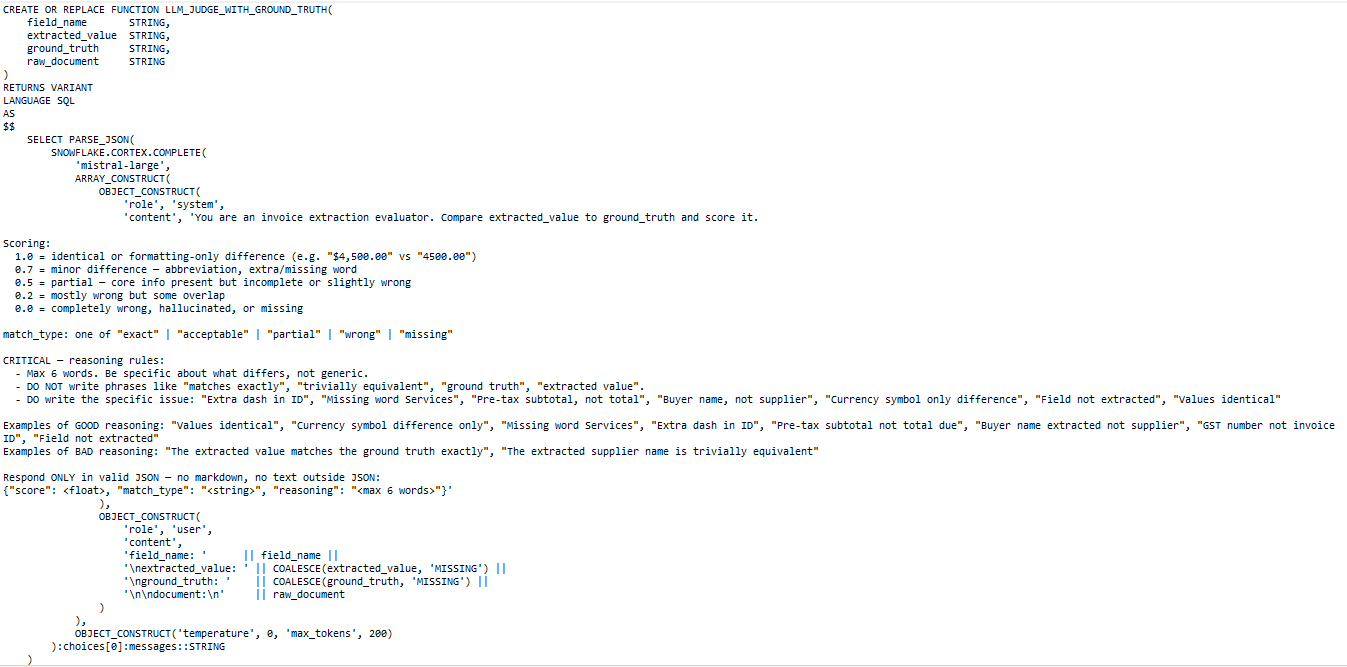

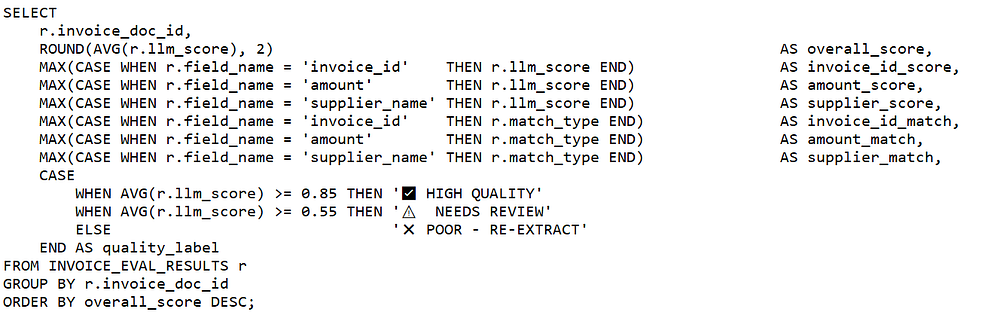

Step 4: Create the LLM-as-a-Judge Function

This is the heart of the pipeline. We create a Snowflake UDF (User-Defined Function) that calls Snowflake Cortex’s COMPLETE endpoint, effectively an API call to a hosted LLM, and asks it to evaluate one field at a time.

The function takes four arguments: the field name, the extracted value, the ground truth value, and the original document text for context. It returns a JSON object with a score, a match type label, and a one-sentence explanation.

Note that we pass the original document text as context so the judge can distinguish between an extraction that contradicts the document versus one that correctly extracted a value but formatted it differently from the ground truth.
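The sketch below shows one way such a function could be written. The function name `JUDGE_INVOICE_FIELD`, the prompt wording, and the set of match type labels are assumptions for illustration rather than the article's exact code. It relies on the documented behaviour that `SNOWFLAKE.CORTEX.COMPLETE`, when called with an options object, returns a JSON string whose `choices[0].messages` entry holds the model's reply.

```sql
-- Illustrative judge UDF; name, prompt, and labels are assumptions.
CREATE OR REPLACE FUNCTION JUDGE_INVOICE_FIELD(
    field_name      VARCHAR,
    extracted_value VARCHAR,
    ground_truth    VARCHAR,
    document_text   VARCHAR
)
RETURNS VARIANT
AS
$$
    PARSE_JSON(                                   -- 2) parse the model reply itself
        GET_PATH(
            PARSE_JSON(                           -- 1) parse the Cortex response envelope
                SNOWFLAKE.CORTEX.COMPLETE(
                    'mistral-large',
                    ARRAY_CONSTRUCT(
                        OBJECT_CONSTRUCT(
                            'role', 'system',
                            'content',
                            'You are an impartial evaluator of invoice field extractions. '
                            || 'Reply with a single JSON object and nothing else, using the keys '
                            || '"score" (number from 0.0 to 1.0), '
                            || '"match_type" (one of EXACT_MATCH, FORMAT_DIFFERENCE, PARTIAL_MATCH, MISMATCH, MISSING), '
                            || 'and "reasoning" (one sentence).'
                        ),
                        OBJECT_CONSTRUCT(
                            'role', 'user',
                            'content',
                            'Field: ' || field_name
                            -- COALESCE keeps a NULL extraction from turning the whole prompt into NULL
                            || '\nExtracted value: ' || COALESCE(extracted_value, 'NULL')
                            || '\nGround truth: '    || COALESCE(ground_truth, 'NULL')
                            || '\nDocument text: '   || COALESCE(document_text, '')
                        )
                    ),
                    {'temperature': 0}
                )
            ),
            'choices[0].messages'
        )::STRING
    )
$$;
```

If the model occasionally wraps its reply in extra text, `PARSE_JSON` will raise an error; swapping the outer call for `TRY_PARSE_JSON` returns NULL instead, which may be easier to handle in a batch run.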

Why temperature: 0?

Setting temperature to zero makes the model pick the most likely token at each step, so its output is as close to deterministic as the service allows. In practice, the same input yields the same score from run to run. This is critical for evaluation pipelines because you need reproducibility so that re-running the evaluation gives comparable results and you can track score changes over time.

Why PARSE_JSON?

Cortex returns the LLM’s reply as a raw string. PARSE_JSON converts it to a Snowflake VARIANT object, which lets you extract individual fields using colon notation (for example: eval_result:score::FLOAT). This makes aggregation in later steps straightforward.
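A small, self-contained illustration of the colon notation follows. The JSON literal stands in for a judge reply and its values are made up for the example.

```sql
-- The literal below imitates a judge reply; its values are illustrative.
WITH judged AS (
    SELECT PARSE_JSON(
        '{"score": 0.9, "match_type": "FORMAT_DIFFERENCE", "reasoning": "Same amount, different formatting."}'
    ) AS eval_result
)
SELECT
    eval_result:score::FLOAT        AS llm_score,
    eval_result:match_type::STRING  AS match_type,
    eval_result:reasoning::STRING   AS reasoning
FROM judged;
```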

Note: A common mistake is to display the LLM’s raw reasoning string directly, because its wording can vary from run to run. A more reliable approach is to derive clean labels from the structured fields the LLM returns consistently: match_type, field_name, llm_score, and the extracted and ground truth values. match_type and field_name are categorical, so the LLM cannot pollute them with verbose language, and it gets both the score and the match_type right reliably. In short, derive the human-readable labels from your own SQL logic.

Step 5: Run the Evaluation

With the function in place, we now join the extractions and ground truth tables, unpivot the three fields into separate rows (so each field is evaluated independently), call the judge on each row, and insert results into INVOICE_EVAL_RESULTS.

(The complete SQL query for this step is too lengthy to include in-line. Please reference the full code in the GitHub repo, then read on).

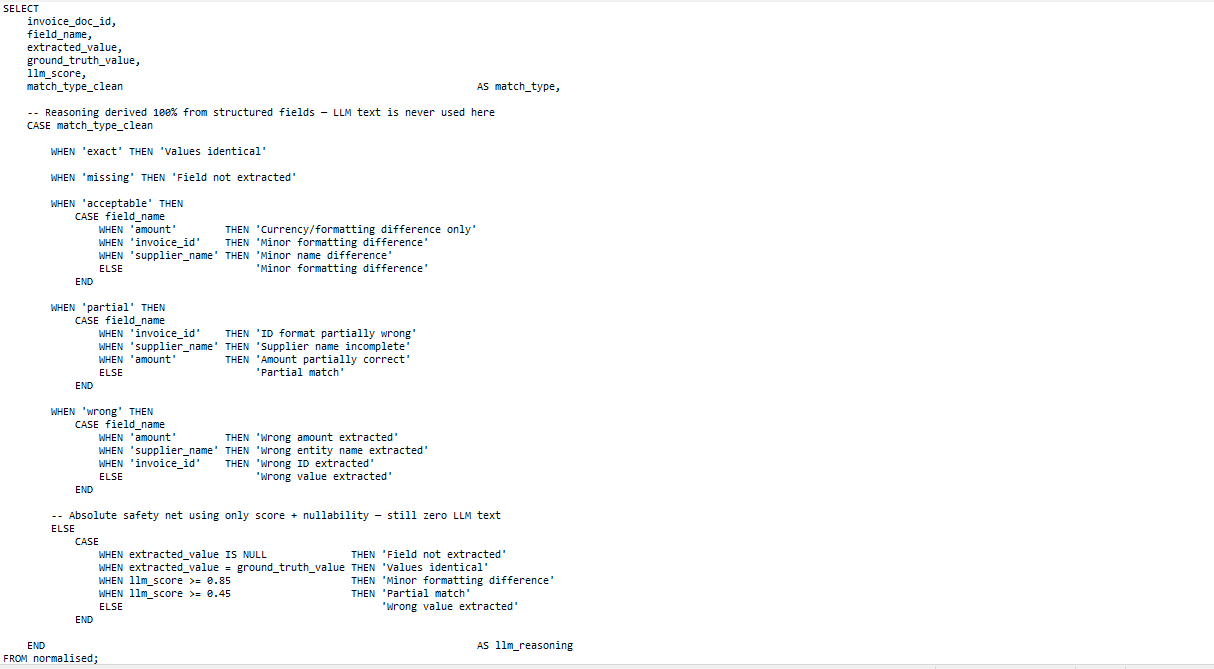

A note on the Reasoning Column: Why a normalised CTE instead of using the reasoning string?

The key architectural decision here is the normalised CTE. Rather than passing eval_result:reasoning::STRING through to the output, we extract only match_type and score from the LLM result and immediately normalize match_type by stripping embedded quotes and whitespace. From that point on, the raw LLM text is never referenced again.

This matters because LLMs are inconsistent with free-text fields even when given explicit instructions. The same input can return ‘Values identical’ in one run and ‘The extracted value is an exact match with the ground truth’ in the next. By deriving the reasoning purely from match_type and field_name, both of which the LLM gets right reliably, you keep your output labels consistent from run to run.

Understanding the UNION ALL Unpivot: Rather than sending all three fields to the judge in one call, we evaluate each field independently. This gives us granular scores per field, lets us identify exactly which field is failing most often in our pipeline, and keeps the judge prompt simple and focused.
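A condensed sketch of this step is shown here; the full query is in the code pack. It assumes tables named `INVOICE_EXTRACTIONS` and `INVOICE_GROUND_TRUTH`, a judge function named `JUDGE_INVOICE_FIELD`, and match type labels such as `EXACT_MATCH`, all of which are illustrative names rather than confirmed ones.

```sql
INSERT INTO INVOICE_EVAL_RESULTS
    (doc_id, field_name, extracted_value, ground_truth_value, llm_score, match_type, reasoning)
WITH unpivoted AS (
    -- One row per field per invoice, so each field is judged on its own.
    SELECT e.doc_id, 'invoice_id' AS field_name,
           e.invoice_id::VARCHAR AS extracted_value,
           g.invoice_id::VARCHAR AS ground_truth_value, e.document_text
    FROM INVOICE_EXTRACTIONS e JOIN INVOICE_GROUND_TRUTH g ON e.doc_id = g.doc_id
    UNION ALL
    SELECT e.doc_id, 'total_amount',
           e.total_amount::VARCHAR, g.total_amount::VARCHAR, e.document_text
    FROM INVOICE_EXTRACTIONS e JOIN INVOICE_GROUND_TRUTH g ON e.doc_id = g.doc_id
    UNION ALL
    SELECT e.doc_id, 'supplier_name',
           e.supplier_name::VARCHAR, g.supplier_name::VARCHAR, e.document_text
    FROM INVOICE_EXTRACTIONS e JOIN INVOICE_GROUND_TRUTH g ON e.doc_id = g.doc_id
),
judged AS (
    SELECT u.*,
           JUDGE_INVOICE_FIELD(u.field_name, u.extracted_value,
                               u.ground_truth_value, u.document_text) AS eval_result
    FROM unpivoted u
),
normalised AS (
    -- Keep only score and match_type; strip stray quotes and whitespace.
    SELECT doc_id, field_name, extracted_value, ground_truth_value,
           eval_result:score::FLOAT AS llm_score,
           UPPER(TRIM(REPLACE(eval_result:match_type::STRING, '"', ''))) AS match_type
    FROM judged
)
SELECT doc_id, field_name, extracted_value, ground_truth_value, llm_score, match_type,
       -- Reasoning is derived in SQL, never copied from the LLM's free text.
       CASE match_type
           WHEN 'EXACT_MATCH'       THEN field_name || ': values identical'
           WHEN 'FORMAT_DIFFERENCE' THEN field_name || ': same value, different formatting'
           WHEN 'PARTIAL_MATCH'     THEN field_name || ': partially matches ground truth'
           WHEN 'MISSING'           THEN field_name || ': no value extracted'
           ELSE                          field_name || ': does not match ground truth'
       END AS reasoning
FROM normalised;
```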

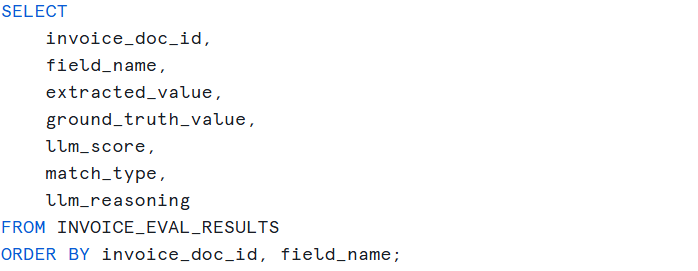

Step 6: Analyze the Raw Results

Start by looking at every individual field evaluation. This gives you the full picture before aggregating.
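A simple query along these lines lists every field evaluation. It assumes the results table has the columns described in Step 1c; `doc_id`, `extracted_value`, `ground_truth_value`, and `reasoning` are illustrative column names.

```sql
-- Every field evaluation, grouped visually by document.
SELECT doc_id,
       field_name,
       extracted_value,
       ground_truth_value,
       llm_score,
       match_type,
       reasoning
FROM INVOICE_EVAL_RESULTS
ORDER BY doc_id, field_name;
```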

You should see output like this for our 10 synthetic invoices:

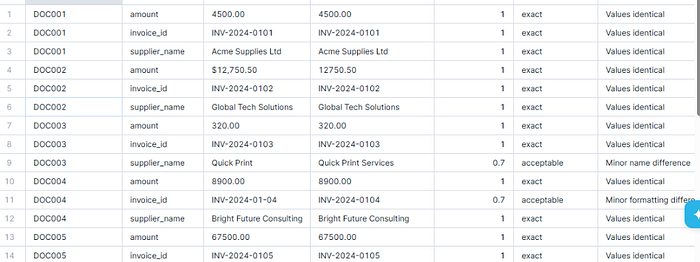

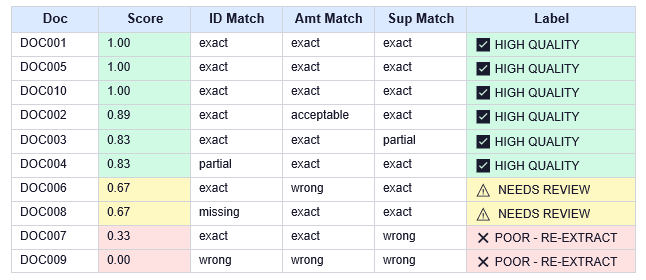

Step 7: Invoice Level Scorecard

Aggregate to a per-invoice quality label. This is the view your operations team would monitor daily.
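One way to sketch this scorecard is to label each invoice by its weakest field. The 0.55 cut-off is the threshold used later in this article; the 0.9 cut-off, the label names, and the `doc_id` column are assumptions you would tune to your own requirements.

```sql
-- Per-invoice scorecard; an invoice is only as good as its weakest field.
SELECT doc_id,
       ROUND(AVG(llm_score), 2)     AS avg_score,
       MIN(llm_score)               AS worst_field_score,
       COUNT_IF(llm_score < 0.55)   AS failing_fields,
       CASE
           WHEN MIN(llm_score) >= 0.9  THEN 'PASS'      -- assumed cut-off
           WHEN MIN(llm_score) >= 0.55 THEN 'REVIEW'
           ELSE 'FAIL'
       END                          AS quality_label
FROM INVOICE_EVAL_RESULTS
GROUP BY doc_id
ORDER BY avg_score;
```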

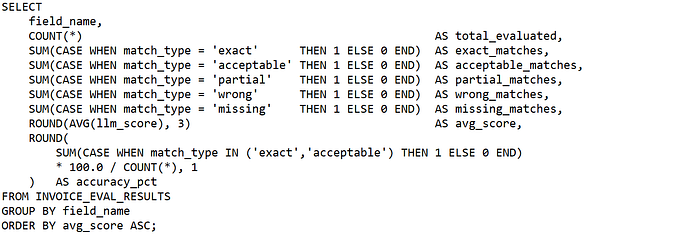

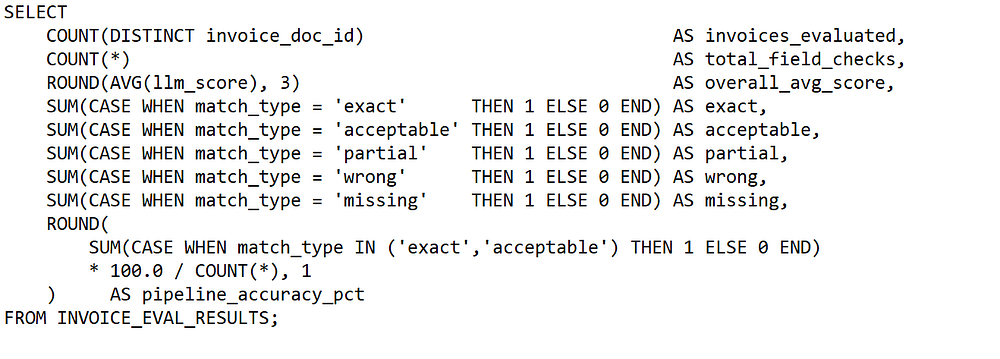

Step 8: Field-Level Accuracy Report

This is the diagnostic view for your ML / engineering team. It shows which field is causing the most extraction errors — essential for deciding where to invest improvement effort.
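A possible form of this report is sketched below, using the 0.55 threshold mentioned later in this article. The output column aliases are illustrative.

```sql
-- Which field fails most often? Lowest average score first.
SELECT field_name,
       COUNT(*)                                                AS evaluations,
       ROUND(AVG(llm_score), 3)                                AS avg_score,
       COUNT_IF(llm_score < 0.55)                              AS below_threshold,
       ROUND(100 * COUNT_IF(llm_score < 0.55) / COUNT(*), 1)   AS pct_below_threshold
FROM INVOICE_EVAL_RESULTS
GROUP BY field_name
ORDER BY avg_score ASC;
```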

Results would look something like this:

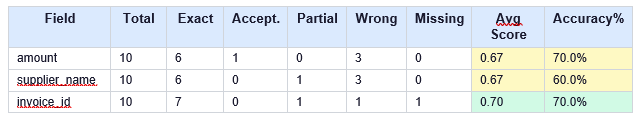

Step 9: Pipeline Summary Dashboard

One query to give you the top-line health of the entire extraction pipeline. This is what you would put in a dashboard or feed into an alerting system.
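As a hedged sketch, the summary could be a single aggregate over the results table. The `doc_id` column and the output aliases are assumptions; the 0.55 threshold is the one used in this article.

```sql
-- Top-line health of the extraction pipeline in one row.
SELECT COUNT(DISTINCT doc_id)                                               AS invoices_evaluated,
       COUNT(*)                                                             AS field_evaluations,
       ROUND(AVG(llm_score), 3)                                             AS overall_avg_score,
       COUNT_IF(llm_score < 0.55)                                           AS fields_below_threshold,
       -- NULLIF avoids a division-by-zero error on an empty results table
       ROUND(100 * COUNT_IF(llm_score >= 0.55) / NULLIF(COUNT(*), 0), 1)    AS pct_fields_passing
FROM INVOICE_EVAL_RESULTS;
```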

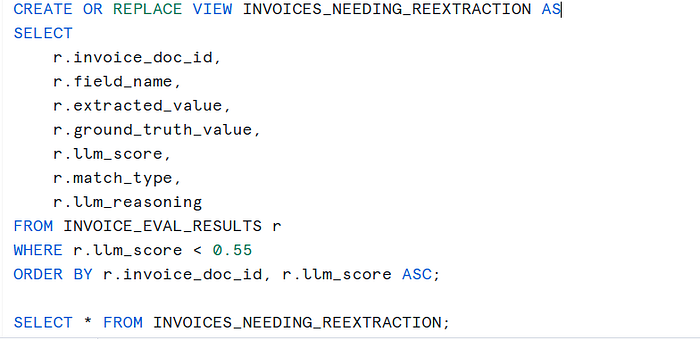

Step 10: Flag Records for Re-Extraction

The final step is actionability. Records scoring below your threshold automatically appear in a view that downstream processes can query to trigger re-extraction or manual review.

From our synthetic dataset, this view will surface DOC006 (amount), DOC007 (supplier), DOC008 (invoice_id), and all three fields of DOC009, exactly the records we designed to fail.
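A minimal version of such a view might look like this. The view name `INVOICES_FOR_REEXTRACTION` and the `doc_id`, `extracted_value`, and `ground_truth_value` columns are illustrative; 0.55 is the threshold used in this article.

```sql
-- Downstream jobs can poll this view to queue re-extraction or manual review.
CREATE OR REPLACE VIEW INVOICES_FOR_REEXTRACTION AS
SELECT doc_id,
       field_name,
       extracted_value,
       ground_truth_value,
       llm_score,
       match_type,
       eval_timestamp
FROM INVOICE_EVAL_RESULTS
WHERE llm_score < 0.55;
```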

Note: For this use case we used mistral-large, which is strong at structured JSON output and available in most Snowflake regions. If your Snowflake account has access to claude-3-5-sonnet or llama3.1-70b-instruct, these are also excellent choices. Additionally, since INVOICE_EVAL_RESULTS has an eval_timestamp column, you can track accuracy week-over-week. A drop in accuracy after a prompt change or a new supplier onboarding is a signal to investigate immediately.
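The week-over-week tracking mentioned above could be sketched as follows, assuming each evaluation run appends rows to INVOICE_EVAL_RESULTS rather than overwriting them. The output aliases are illustrative.

```sql
-- Average judge score per field per week, to spot regressions over time.
SELECT DATE_TRUNC('week', eval_timestamp)   AS eval_week,
       field_name,
       COUNT(*)                             AS evaluations,
       ROUND(AVG(llm_score), 3)             AS avg_score
FROM INVOICE_EVAL_RESULTS
GROUP BY eval_week, field_name
ORDER BY eval_week, field_name;
```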

In summary, this workflow brings the entire AI extraction evaluation loop into a single, production-ready pipeline inside Snowflake. Starting from structured invoice extractions and human-verified ground truth, each field is independently evaluated using an LLM-as-a-Judge, producing deterministic scores and clean match types. The results flow directly into analysis, scorecards, and automated re-extraction queues, ensuring low-quality outputs are identified and acted on immediately. The outcome is a measurable, auditable, and scalable approach where extraction accuracy is continuously monitored, validated, and improved rather than assumed.

LLM-as-a-Judge is one of the most practical evaluation techniques available for document AI pipelines. By running it inside Snowflake Cortex, you keep everything in the warehouse: no external API calls, no data leaving your environment, no additional infrastructure to manage.

A few points to note:

Always use ground truth: without it you are checking plausibility, not accuracy.

Evaluate fields independently: it reveals which specific extraction is failing.

Use match types, not just scores: they make the results actionable.

For example, a score below 0.55 should automatically trigger re-extraction or human review.

Start with a small benchmark: even 50 to 100 annotated records give you a meaningful baseline to improve against.

The complete, runnable SQL script is available here. Copy it into a Snowflake worksheet and run the steps sequentially.
