
Author(s): Sergey Andreychenko

Originally published on Towards AI.

Your CTO has root access to production. Your DevOps engineer can delete your database. Your security lead knows every password.

SOC2 won’t stop them. ISO27001 won’t stop them. Your $200K/year PAM solution won’t stop them either.

This isn’t paranoia. It’s architecture.

The Gap Nobody Fills

Compliance frameworks — SOC2, ISO27001 — have meaningfully raised the security baseline. But they verify systems and processes, not real-time actions by trusted users. That’s not a failure. It’s scope.

The tools we layer on top — PAM, Zero Trust, SIEM, session recording — share the same fundamental flaw: they operate after the fact. PAM controls who gets in, not what they do inside. SIEM flags anomalies from logs generated after the action. Session recording creates evidence for post-mortems. These are forensics, not prevention.

The numbers confirm the gap:

$4.99M: average cost of a malicious insider attack [1]

292 days: average time to identify and contain a breach involving stolen or compromised credentials [1]

55% of organizations identify privileged users as their greatest security risk [2]

This isn’t theoretical. A former Cash App employee downloaded 8.2 million customer records after their employment ended — access was never revoked [3]. Block settled for $15M. In November 2025, a CrowdStrike insider was caught sharing screenshots of internal systems and SSO cookies with the ShinyHunters hacking group — reportedly for $25,000 [4]. CrowdStrike caught them before major damage, but the insider had already acted. Detection worked. Prevention didn’t.

Your most dangerous attack surface isn’t external. It’s the people you trust most, operating in the gap between authentication (which lets them in) and forensics (which catches them later).

The Architectural Shift

Here’s what I’ve been watching: engineers are moving their workflows into AI agents. And I think this changes the security equation in ways we haven’t fully recognized yet.

By the end of 2025, 85% of developers were regularly using AI tools for coding [5]. But it’s not just autocomplete anymore. Claude Code, Cursor, and Codex are autonomous agents that understand entire repositories, execute multi-file changes, run shell commands, and manage infrastructure.

DevOps engineers run Kubernetes deployments through Claude Code. Platform teams manage Terraform configs through agentic IDEs. SREs debug production incidents by chatting with AI that has access to their observability stack.

The interface is shifting. From terminal to chat. From direct access to mediated access. From commands to conversations.

This creates something that didn’t exist before: a natural choke point. And I believe that choke point is a security opportunity hiding in plain sight.

The Thin Client Revenge

We’ve tried centralized access before. In the 90s and 2000s, thin clients promised everything: server-side execution, no local data, single point of control, centralized security.

It failed. Not because the security model was wrong — it was elegant. It failed because the UX was miserable. Latency killed productivity. Limited functionality frustrated users. Shadow IT exploded. The lesson seemed clear: you can’t force users into constrained interfaces for security’s sake.

But two things changed.

AI agents are genuinely better. Engineers aren’t being forced into Claude Code or Cursor. They’re choosing them because they’re more productive. The constraint doesn’t feel like a constraint — it feels like an upgrade.

Chat is where humans already live. The terminal is powerful, but unforgiving — a typo can be destructive, context doesn’t carry between sessions, and the learning curve keeps non-engineers out entirely. Chat is the opposite. WhatsApp, Telegram, Slack, Discord — people spend hours in chat interfaces daily. An AI agent in a chat isn’t asking anyone to learn something new. It’s taking the interface humans already prefer and making it capable of real work.

Maybe the enterprise security dream of “single point of control” was always achievable. It just needed to arrive as a productivity tool in a familiar form factor, not as a lockdown policy in an alien interface.

The Agent Architect: A New Security Role

If the chat interface becomes the single entry point to infrastructure, the critical question is: who designs what each agent can do?

I’ve been calling this role the Agent Architect — a person (or team) that defines agent capabilities the way a system administrator once defined permissions, but at a fundamentally different level of abstraction. Think less “who has access to what” and more “what can each agent do, and for whom.”

In practice, you’re creating virtual employees. Each agent gets a role, responsibilities, access scope, and constraints — the same things you’d define when hiring a person. The difference: a virtual employee doesn’t get tired, doesn’t hold grudges, doesn’t have a last day at the company — and every action it takes is logged. I wrote about treating AI systems like employees earlier [6] — the Agent Architect is the person who does the hiring.

“Image generated by the author using Leonardo AI”

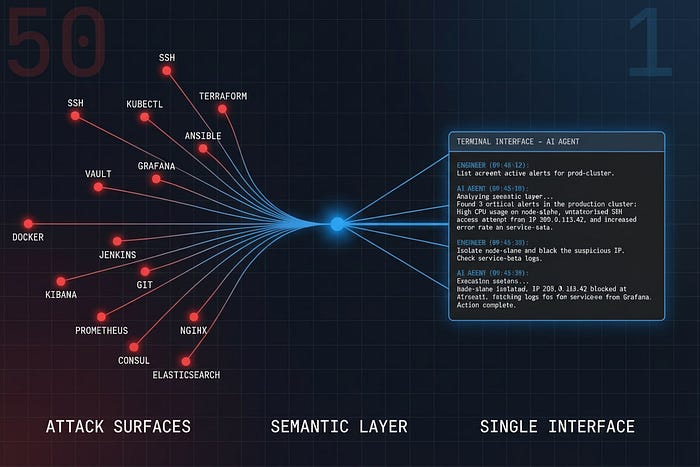

Today’s access model:

Employee → [credentials to 50 systems] → Infrastructure

Each person gets direct access. Each person needs monitoring. Each person is a potential threat vector.

The Agent Architect model:

Agent Architect → designs agents with specific capabilities
↓
DevOps Agent (can: deploy, scale, view logs)
DBA Agent (can: query, backup; cannot: delete, export)
Analyst Agent (can: read aggregated reports; cannot: access raw data)
↓
Employees → talk to their agent → Infrastructure

What I see here is capability-based security at the agent level. The DevOps engineer doesn’t have “access to Kubernetes.” Their agent has the capability to perform specific Kubernetes operations. If this works the way I think it does, the implications are significant:

Least privilege by design. The agent literally cannot do what it wasn’t given. Not “shouldn’t” — cannot.

Fewer privileged humans. One person with full access instead of fifty people with partial access and fuzzy boundaries.

Audit by default. Every interaction is a conversation. Conversations are logged.

Single moderation point. One semantic layer covering all agents, all roles.

Privacy-preserving by design. The moderation layer can evaluate and block without storing content. Chat histories can be ephemeral. The system needs to prevent, not surveil — a fundamentally different architecture from keyloggers and screen recordings. How much privacy vs. how much control is a market question, not a technical one. This architecture makes the tradeoff tunable for the first time.

An important clarification on “cannot”: the agent’s capabilities aren’t enforced by the LLM itself — that would be a prompt away from breaking. The agent calls strictly typed tools through something like MCP (Model Context Protocol) or predefined skill definitions, each with its own schema and permission scope. The LLM decides what to call; the tool layer decides whether the call is allowed. Prompt injection is a real attack vector, but even a successfully injected agent can only invoke tools that were explicitly registered for its role. This isn’t airtight — it’s defense in depth, not a magic wall — but it’s meaningfully different from “trust the LLM to say no.”
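To make the “LLM decides what to call, tool layer decides whether it’s allowed” split concrete, here is a minimal Python sketch. It is not MCP’s API: `Tool`, `ToolLayer`, `PermissionDenied`, and the role and tool names are hypothetical, and a real tool layer would validate arguments against a JSON Schema rather than Python types. What it illustrates is default deny: a tool that was never registered for a role cannot run, whatever the model asks for.

```python
from dataclasses import dataclass, field
from typing import Any, Callable


class PermissionDenied(Exception):
    """Raised when a proposed tool call falls outside the role's registered tools."""


@dataclass(frozen=True)
class Tool:
    name: str
    params: dict[str, type]  # parameter name -> required Python type
    handler: Callable[..., Any]


@dataclass
class ToolLayer:
    # role -> {tool name -> Tool}; anything not registered here is denied
    registry: dict[str, dict[str, Tool]] = field(default_factory=dict)

    def register(self, role: str, tool: Tool) -> None:
        self.registry.setdefault(role, {})[tool.name] = tool

    def call(self, role: str, tool_name: str, args: dict[str, Any]) -> Any:
        """Execute a call the LLM proposed, but only if the role may make it."""
        tool = self.registry.get(role, {}).get(tool_name)
        if tool is None:
            raise PermissionDenied(f"role {role!r} has no tool {tool_name!r}")
        if set(args) != set(tool.params):
            raise PermissionDenied(f"unexpected arguments for {tool_name!r}: {sorted(args)}")
        for key, expected in tool.params.items():
            if not isinstance(args[key], expected):
                raise PermissionDenied(f"argument {key!r} must be {expected.__name__}")
        return tool.handler(**args)


# Hypothetical DBA agent: may back up and count rows, has no delete or export tool.
layer = ToolLayer()
layer.register("dba", Tool("backup", {"database": str}, lambda database: f"backed up {database}"))
layer.register("dba", Tool("row_count", {"table": str}, lambda table: 0))

print(layer.call("dba", "backup", {"database": "orders"}))  # prints "backed up orders"
try:
    # Even if a prompt injection convinces the model to ask for this, it is not registered.
    layer.call("dba", "drop_table", {"table": "users"})
except PermissionDenied as err:
    print(err)
```

Because the registry is keyed by role, adding a capability means registering one more tool for one role; nothing the model says at runtime can widen that set.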

A reasonable objection: “Can we trust AI agents with infrastructure?” For standard operations, yes. Current models reliably execute typical infrastructure tasks. Ask the agent to “create a server in AWS eu-west-1, t3.medium, with these security groups,” let it carry that out through Terraform internally, and it works. The abstraction level changes: you define outcomes, not atomic commands.

But the crucial distinction: let the agent execute your decisions, not make decisions for you. The agent is an executor, not a strategist. It doesn’t decide whether to scale down the cluster. You decide that. It executes the scaling. When something goes wrong, the accountability chain is explicit: a human made the decision, the agent carried it out.

To be clear — you can and should strategize with AI. An agent will surface tradeoffs your team wouldn’t consider under time pressure. But strategy and execution should be separate conversations. Think together, then execute through controlled channels. The danger isn’t AI helping you reason. It’s AI reasoning and acting in one uninterrupted flow where nobody stops to ask “wait, should we?”

There’s a subtlety here that matters in practice. Even a senior engineer with years of experience and deep pattern knowledge makes worse decisions when they’re tired, distracted, or context-switching between three incidents at once. Today, that engineer types a command and the system executes it. No questions asked. No second opinion. No “are you sure this is what you meant at 3am?”

A conversation from our team chat last week captures it well:

Colleague: Did you see this? AI lost someone’s money lol. Moonwell lost $1.78M [7] because a dev trusted Claude-generated oracle code — cbETH got priced at $1.12 instead of $2,200. A basic test would’ve caught it.

Me: Yeah, but look what happened without AI the same week — a Bithumb employee typed “BTC” instead of “KRW” [8] in a promo payout field. Sent $40 billion in Bitcoin to 695 users instead of $1.37 each.

Colleague: …so it’s cheaper to lose money with AI than without it?

That’s the point. The question isn’t “does AI make mistakes?” It does. The question is what happens between the decision and the execution — and right now, for most infrastructure, the answer is: nothing. We’ve solved human unreliability with supervision, escalation, and feedback loops for centuries. We just forgot to apply the same patterns to AI-mediated workflows.

An agent in the loop changes this. Before executing, it can surface what it sees: “This will affect 12 production services. There’s an active deployment on two of them. Here’s an alternative approach that isolates the change. Do you want to proceed?” The decision is still yours. But now you’re making it with context laid out in front of you, not from memory while half-asleep. The agent doesn’t replace your judgment — it gives your judgment better inputs at the moment it matters most. Right now, most infrastructure access doesn’t work this way. It’s command → execute → hope for the best.
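A pre-execution check like the one described above could look roughly like this. It is a sketch under the assumption that the agent can already tell which services a change touches and which of them have a deployment in flight; `Change`, `preflight`, and `run` are hypothetical names, and `confirm` stands in for whatever the chat interface uses to ask the human.

```python
from dataclasses import dataclass
from typing import Callable


@dataclass
class Change:
    description: str
    services: list[str]  # services the change would touch


def preflight(change: Change, production: set[str], deploying: set[str]) -> tuple[bool, str]:
    """Summarize a change's impact before execution; return (needs_confirmation, summary)."""
    prod_hits = [s for s in change.services if s in production]
    busy = [s for s in prod_hits if s in deploying]
    lines = [f"Planned: {change.description}",
             f"This will affect {len(prod_hits)} production service(s)."]
    if busy:
        lines.append(f"Active deployment on: {', '.join(busy)}.")
        idle = [s for s in prod_hits if s not in deploying]
        if idle:
            # Suggest isolating the change to services that are not mid-deployment.
            lines.append(f"Alternative: apply to {', '.join(idle)} first and hold the rest.")
    needs_confirmation = bool(prod_hits)
    if needs_confirmation:
        lines.append("Do you want to proceed?")
    return needs_confirmation, "\n".join(lines)


def run(change: Change, production: set[str], deploying: set[str],
        confirm: Callable[[str], bool], execute: Callable[[Change], str]) -> str:
    """Execute only after a human has seen the summary and said yes."""
    needs_confirmation, summary = preflight(change, production, deploying)
    if needs_confirmation and not confirm(summary):
        return "cancelled"
    return execute(change)
```

Keeping `confirm` as a callback is deliberate: the agent assembles the context, but the yes/no stays with a person.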

And the recursive part — you can create new tools with AI. Describe a custom deployment workflow. The agent builds the tooling. The tooling becomes a capability. The capability gets assigned to role-specific agents. This is why the Agent Architect role scales: you’re not hand-coding every integration, you’re describing capabilities.

Chat as Semantic Security Layer

When infrastructure access flows through a conversational interface, you get something architecturally unique: a layer that can understand intent, not just commands.

Traditional security pattern-matches on syntax: block rm -rf /, alert on SELECT * FROM users, flag large file transfers. A malicious insider doesn’t type obvious commands. They craft legitimate-looking queries that happen to extract sensitive data. They know the rules because they wrote them.

A semantic layer operates differently. The request hits a classification model that evaluates intent against a policy matrix. Context matters: who’s asking, at what time, what they’ve been doing, and what’s normal for their role. High confidence benign → execute. High confidence malicious → block. Low confidence → escalate or request clarification. Latency is measured in milliseconds.
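The three-way routing reduces to two thresholds on the classifier’s output. In this sketch, `p_malicious` is assumed to come from an intent classifier that has already scored the request together with its context; the function name and the 0.1 / 0.9 cut-offs are illustrative, not values from a production system.

```python
from enum import Enum


class Verdict(Enum):
    EXECUTE = "execute"
    BLOCK = "block"
    ESCALATE = "escalate"


def route(p_malicious: float, benign_below: float = 0.1, block_above: float = 0.9) -> Verdict:
    """Turn a classifier's probability that a request is malicious into an action.

    p_malicious is assumed to come from an intent classifier that has already
    scored the request together with its context (requester, role, time, history).
    """
    if not 0.0 <= p_malicious <= 1.0:
        raise ValueError("p_malicious must be between 0 and 1")
    if p_malicious < benign_below:
        return Verdict.EXECUTE   # high confidence benign
    if p_malicious > block_above:
        return Verdict.BLOCK     # high confidence malicious
    return Verdict.ESCALATE      # uncertain: ask a human or request clarification
```

Widening the gap between the two thresholds sends more traffic to humans; narrowing it trades reviewer load for more automated mistakes. Per-role thresholds are one natural extension.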

“Export all customer emails for the marketing analysis” → Approved, logged, proceeds. “Pull the user table filtered by signup date for the retention report” — legitimate on its own, but it’s the third such request this week, each with slightly different filters, and the cumulative result set covers 80% of the database. A syntax-based system sees three valid queries. A semantic layer sees a pattern.
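Catching the “three reasonable queries” pattern means tracking what each requester has seen, not just what each query asks for. A minimal sketch, assuming the mediation layer can see the primary keys of the rows a query returns; `CoverageTracker`, the one-week window, and the 80% threshold are hypothetical choices mirroring the example above.

```python
from collections import defaultdict, deque
from dataclasses import dataclass, field


@dataclass
class CoverageTracker:
    """Flags a requester whose recent queries, taken together, cover too much of a table."""
    table_size: int                        # total rows in the sensitive table
    window_seconds: float = 7 * 24 * 3600  # rolling window: one week
    threshold: float = 0.8                 # alert at 80% cumulative coverage
    history: dict = field(default_factory=lambda: defaultdict(deque))

    def record(self, user: str, ts: float, row_ids: set[int]) -> bool:
        """Add one query's result set; return True if cumulative coverage crosses the threshold."""
        queries = self.history[user]
        queries.append((ts, frozenset(row_ids)))
        # Forget queries that fell out of the rolling window.
        while queries and queries[0][0] < ts - self.window_seconds:
            queries.popleft()
        covered = set().union(*(rows for _, rows in queries))
        return len(covered) / self.table_size >= self.threshold


tracker = CoverageTracker(table_size=100)
tracker.record("bob", 0, set(range(0, 30)))      # 30% covered so far
tracker.record("bob", 3600, set(range(30, 60)))  # 60% covered so far
tracker.record("bob", 7200, set(range(60, 90)))  # 90% covered: flagged
```

Each of the three queries would pass a per-query check on its own; only their union crosses the line.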

No system catches everything. A sophisticated insider will eventually find the edges. But the difference between “unlimited direct access with post-hoc review” and “mediated access with real-time pattern detection” is the difference between a wide-open door and a door with a lock that can be picked. You still want the lock.

This isn’t science fiction. Content moderation systems have been doing semantic intent analysis at scale for years — the same architectures that catch harmful content phrased in endlessly creative ways work just as well for data exfiltration attempts. At Watchers, we moderate millions of messages across gaming, sports betting, and live platforms with accuracy above 95% — at speeds and costs that no human team with any process can match. Semantic analysis isn’t perfect, but it doesn’t need to be perfect. It needs to be better than the alternative, which right now is nothing.

The second mechanism is simpler but underrated: shared visibility. When infrastructure operations happen in a shared chat, colleagues see what everyone else is doing. “Hey, why are you pulling the full customer table at 2am?” This won’t scale to thousands of engineers. But the current trend is moving the other way, toward small, high-leverage teams doing more with fewer people. Telegram famously runs on ~30 engineers. The “one-man army” with AI tooling is becoming a real pattern in startups. For a team of 5–50 highly qualified engineers, shared visibility in a chat isn’t overhead; it’s a natural working environment where accountability happens as a side effect.

There’s a third, quieter mechanism: you can log every agent command — stripped of conversational context — into a separate audit stream. No chat history, no personal discussions, just a clean feed of actions: who requested what, when, what the agent executed. Enough to evaluate behavior patterns, measure operational quality, flag anomalies — without reading anyone’s messages. The privacy-preserving principle from earlier applies here too: you get the signal without the surveillance.
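An allowlist is the simplest way to get “the signal without the surveillance”: copy only the action fields into the audit stream and drop everything else. The event shape and field names below are assumptions for illustration, not a format from any particular agent framework.

```python
import json
from datetime import datetime, timezone
from typing import Any, Optional

# Only these fields reach the audit stream; chat text and replies are dropped.
AUDIT_FIELDS = ("requester", "agent", "tool", "args", "status")


def to_audit_line(event: dict[str, Any], ts: Optional[datetime] = None) -> str:
    """Reduce an agent event to a single JSON line of who/what/when, without the conversation."""
    record = {key: event[key] for key in AUDIT_FIELDS if key in event}
    record["ts"] = (ts or datetime.now(timezone.utc)).isoformat()
    return json.dumps(record, sort_keys=True)


event = {
    "requester": "anna",
    "agent": "deploy",
    "tool": "scale",
    "args": {"service": "api", "replicas": 4},
    "status": "ok",
    "message": "api is melting again, can you scale it up?",  # never written to the audit stream
}
print(to_audit_line(event, datetime(2025, 1, 1, tzinfo=timezone.utc)))
```

An allowlist fails closed: a field added to events later, such as a chat excerpt, stays out of the audit stream until someone deliberately adds it. Tool arguments can still carry sensitive values (a SQL string, a customer ID), so a real stream might hash or truncate `args`.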

Not Another Layer — A Funnel

Think about how a DevOps engineer interacts with infrastructure today: SSH, kubectl, cloud console, CI/CD, database clients, Terraform, Ansible, monitoring dashboards, custom scripts, Slack bots — each one is an attack surface, each needs monitoring, each generates logs in different formats.

The insight — at least as I see it — isn’t “add AI monitoring everywhere.” It’s: consolidate first, then monitor.

When infrastructure access flows through a single conversational interface, fifty attack surfaces become one, fifty monitoring integrations become one, and fifty log formats become one audit trail.

The AI agent becomes the interface to infrastructure. It’s not watching from outside — it’s the funnel through which everything flows. And engineers are already consolidating their workflows into AI agents. The funnel is forming naturally.

That consolidation cuts both ways: your single point of control is now also your single point of failure. If the agent goes down or gets compromised, you need a fallback. We keep break-glass SSH access for emergencies, but it’s disabled by default, audited separately, and requires two people to activate. The goal isn’t “only path,” it’s “default path with monitored exceptions.”
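The break-glass rules (off by default, two people to activate, audited separately) fit in a small state machine. This sketch adds two assumptions the text doesn’t specify: access expires after a fixed TTL, and both approvals must arrive within a short window. `BreakGlass` and its parameters are hypothetical.

```python
import time
from dataclasses import dataclass, field
from typing import Optional


@dataclass
class BreakGlass:
    """Emergency access: off by default, needs two different people, expires on its own."""
    ttl_seconds: float = 3600                       # how long access stays open once activated
    approval_window: float = 600                    # both approvals must land within 10 minutes
    audit: list = field(default_factory=list)       # kept apart from the agent audit stream
    _approvals: dict = field(default_factory=dict)  # person -> time of approval
    _expires_at: Optional[float] = None

    def is_active(self, now: Optional[float] = None) -> bool:
        now = time.time() if now is None else now
        return self._expires_at is not None and now < self._expires_at

    def approve(self, person: str, now: Optional[float] = None) -> bool:
        """Record one person's approval; activate once two distinct people agree."""
        now = time.time() if now is None else now
        self.audit.append((now, "approve", person))
        if self.is_active(now):
            return True
        self._approvals[person] = now  # the same person approving twice still counts once
        recent = sorted(p for p, t in self._approvals.items() if now - t <= self.approval_window)
        if len(recent) >= 2:
            self._expires_at = now + self.ttl_seconds
            self.audit.append((now, "activated", tuple(recent)))
            self._approvals.clear()  # the next activation needs two fresh approvals
        return self.is_active(now)
```

Letting access lapse on its own means nobody has to remember to close the door after the incident.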

And to be clear about what’s behind the agents: it’s not AI inventing infrastructure from scratch. Under the hood it’s ArgoCD, Vault, managed databases, cloud-native backup systems — battle-tested industry standards with detailed documentation. The agent’s job is to follow that documentation and execute through those tools correctly. Current models handle this remarkably well, precisely because major cloud providers write thorough, structured docs. The AI isn’t replacing your infrastructure stack. It’s becoming a better interface to it.

What This Looks Like in Practice

We’re testing this model at Watchers right now. It’s early, but the results are promising enough that I want to share what we’re seeing.

One thing that mattered: before building any agents for the team, I spent a couple of months using AI tools myself for real infrastructure work — deployments, debugging, monitoring. Not to evaluate them theoretically, but to understand their behavior patterns firsthand. Where they’re reliable, where they hallucinate, where they need guardrails. Only after I had a feel for the edges did I design agents for others. The Agent Architect needs to understand the tool the way a manager needs to understand the job before delegating it.

Our infrastructure access happens through a shared chat where the tech team can give tasks to specialized agents and watch execution in real-time. Others can correct, comment, or escalate. Each agent has a different access level and audience — not everyone sees everything, and not everyone can talk to every agent.

Professor Yoda — trained on our documentation. Anyone in the company — sales, support, whoever — can ask how our product works. No infrastructure access, just knowledge.

Alert Einstein — helps the tech team set up monitoring alerts. Describe what you want to watch. Einstein creates the alert in Grafana, building queries from natural language. No need to know PromQL. But it’s not just for engineers — managers can create personal alerts for their own edge-case questions (“notify me if churn spikes above 5% on Tuesdays”) that go only to them. It’s safe because everything still runs through the same infrastructure, the same agent, the same permission model. No shadow dashboards, no rogue spreadsheets pulling from prod.
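Under the hood, a request like “notify me if churn spikes above 5% on Tuesdays” has to become a query plus a routing rule. The sketch below assumes the LLM has already extracted a structured intent and that churn is exposed as a Prometheus metric named `churn_rate` (a hypothetical name). The returned dictionary is an illustrative spec, not Grafana’s alert-rule API; the PromQL itself uses the standard `day_of_week()` function, which returns 0 for Sunday through 6 for Saturday in UTC.

```python
from dataclasses import dataclass
from typing import Optional

# PromQL's day_of_week() returns 0 (Sunday) through 6 (Saturday), evaluated in UTC.
WEEKDAYS = {"sunday": 0, "monday": 1, "tuesday": 2, "wednesday": 3,
            "thursday": 4, "friday": 5, "saturday": 6}


@dataclass
class AlertIntent:
    """Fields an LLM is assumed to extract from a natural-language alert request."""
    metric: str             # hypothetical metric name, e.g. "churn_rate"
    threshold: float        # 5% expressed as 0.05
    weekday: Optional[str]  # None means every day
    requester: str


def build_alert(intent: AlertIntent, allowed_metrics: set[str]) -> dict:
    """Turn an intent into a PromQL expression plus a personal, requester-only route."""
    if intent.metric not in allowed_metrics:
        # Same permission model as every other agent: no alerting on metrics you weren't granted.
        raise PermissionError(f"{intent.requester} may not alert on {intent.metric!r}")
    expr = f"{intent.metric} > {intent.threshold}"
    if intent.weekday:
        expr += f" and on() day_of_week() == {WEEKDAYS[intent.weekday.lower()]}"
    return {"expr": expr, "notify": [intent.requester], "labels": {"owner": intent.requester}}
```

The permission check runs before any query is built, so a personal alert can only read metrics its owner was already allowed to see.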

Deploy Depardieu — the serious one. Few people have access. Handles deployments, scaling, configuration changes. All significant operations require approval from 1–2 designated reviewers. The approval happens in the same chat. The tech team sees. When Alert Einstein publishes an incident, Depardieu picks it up immediately — it’s connected to our Grafana metrics, logs, and deployment history, so it’s not guessing from the alert text alone. It pulls actual data, surfaces possible causes, and proposes solutions. The tech team still makes the call (we remember: strategy and execution stay separate), but the time between “something broke” and “here are your options” shrinks from minutes of frantic investigation to seconds.

The next step we’re exploring: a risk matrix that lets Depardieu auto-apply low-risk fixes at 3am with one-click rollback, and wake someone for anything complex. Same confidence-threshold logic we use in content moderation, applied to infrastructure.
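A risk matrix of this kind is a lookup table plus one hard rule. In the sketch below, the risk levels, the 0.7 / 0.9 confidence bands, and the matrix contents are hypothetical; only low-risk fixes with reasonable confidence are auto-applied, and nothing is auto-applied without a rollback path.

```python
from enum import Enum


class Risk(Enum):
    LOW = 0
    MEDIUM = 1
    HIGH = 2


# Hypothetical matrix: one row per risk level, one column per confidence band (low, medium, high).
MATRIX = {
    Risk.LOW:    ("wake_human", "auto_apply", "auto_apply"),
    Risk.MEDIUM: ("wake_human", "wake_human", "wake_human"),
    Risk.HIGH:   ("wake_human", "wake_human", "wake_human"),
}


def confidence_band(confidence: float) -> int:
    """Bucket the agent's confidence in its proposed fix into band 0, 1 or 2."""
    if confidence < 0.7:
        return 0
    return 1 if confidence < 0.9 else 2


def decide(risk: Risk, confidence: float, has_rollback: bool) -> str:
    action = MATRIX[risk][confidence_band(confidence)]
    # Never auto-apply a fix that cannot be undone with one click.
    if action == "auto_apply" and not has_rollback:
        return "wake_human"
    return action
```

Keeping the policy as data rather than branching logic means tuning it (say, allowing medium-risk fixes at high confidence) is a one-cell change that reviewers can read.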

The pattern: request in shared chat (visible to tech team) → agent executes within its capabilities → destructive ops require approval → execution is logged and visible → the team learns.
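The same flow, reduced to its control points: a capability check, an approval gate for destructive operations, and a log entry for every outcome. This is a sketch under stated assumptions, not the Watchers implementation: `Operation`, `Gate`, and the rule that requesters cannot approve their own operations are hypothetical, and execution itself is stubbed out.

```python
from dataclasses import dataclass, field


@dataclass
class Operation:
    requester: str
    agent: str
    action: str
    destructive: bool


@dataclass
class Gate:
    capabilities: dict[str, set[str]]  # agent -> actions it may perform
    reviewers: set[str]                # designated reviewers for destructive ops
    required_approvals: int = 1        # the text mentions 1-2 reviewers
    log: list[str] = field(default_factory=list)

    def submit(self, op: Operation, approvals: set[str]) -> str:
        """Run one request through: capability check, approval gate, execution, log."""
        if op.action not in self.capabilities.get(op.agent, set()):
            status = "rejected: outside agent capabilities"
        elif op.destructive:
            # Only designated reviewers count, and nobody approves their own request.
            valid = (approvals & self.reviewers) - {op.requester}
            if len(valid) >= self.required_approvals:
                status = "executed"  # a real gate would invoke the agent's tool here
            else:
                status = f"pending: {len(valid)}/{self.required_approvals} approvals"
        else:
            status = "executed"  # a real gate would invoke the agent's tool here
        # Every outcome is logged, including rejections and pending requests.
        self.log.append(f"{op.requester} -> {op.agent}.{op.action}: {status}")
        return status
```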

Speed surprised us. What used to be “file a ticket, wait for DevOps, get it done tomorrow” is now “ask in chat, approval in 5 minutes, done.” The visibility didn’t slow us down — it sped us up because context was already shared.

We started this for productivity. The security properties emerged as a side effect. No one can quietly do something sketchy because the chat is right there.

The Obvious Objection

Yes, this only works if infrastructure access actually flows through the chat interface. If your CTO can still SSH directly into production, the semantic layer doesn’t help.

But look at the trajectory. Cloud consoles replaced direct server access. IaC replaced manual configuration. Approval workflows replaced yolo deployments. At each step, we traded direct access for something more transparent and controlled. Chat with AI agents is the same trade — not a new “interface to infrastructure,” but a better way to interact with the tools that already manage it. And unlike previous steps, engineers are adopting it voluntarily because it makes them faster.

Will this become the standard? I don’t know. But we’re running it, the security properties are real, and the productivity gains paid for the experiment before we even thought about security. That’s a bet I’m comfortable making.

If this resonates — just try it. Pick the most annoying recurring ticket your team handles, build an agent for it, put it in a shared channel. We started with documentation queries (Yoda), moved to alerting (Einstein), and only then touched deployments (Depardieu). Each step taught us what the next one should look like. You don’t need a strategy. You need one bot and one workflow. The rest will become obvious fast.

References

[1] IBM, “Cost of a Data Breach Report 2024,” IBM Security, 2024. [Online]. Available: https://www.ibm.com/reports/data-breach

[2] Cybersecurity Insiders, “Insider Threat Report 2024,” 2024. [Online]. Available: https://www.cybersecurity-insiders.com/

[3] L. Kolodny, “Block confirms Cash App data breach affecting 8.2 million US customers,” TechCrunch, Apr. 2022. [Online]. Available: https://techcrunch.com/2022/04/05/block-cash-app-data-breach/

[4] B. Toulas, “CrowdStrike catches insider feeding information to hackers,” BleepingComputer, Nov. 2025. [Online]. Available: https://www.bleepingcomputer.com/news/security/crowdstrike-catches-insider-feeding-information-to-hackers/

[5] JetBrains, “The State of Developer Ecosystem 2025,” JetBrains Research, Oct. 2025. [Online]. Available: https://blog.jetbrains.com/research/2025/10/state-of-developer-ecosystem-2025/

[6] S. Andreychenko, “Why We’re Deploying AI Wrong: Lessons from Managing People,” Medium, 2025. [Online]. Available: https://medium.com/@sandreychenko/why-were-deploying-ai-wrong-lessons-from-managing-people-f918b0878879

[7] Crypto.news, “Moonwell’s AI-coded oracle glitch misprices cbETH at $1, drains $1.78M,” Feb. 2026. [Online]. Available: https://crypto.news/moonwells-ai-coded-oracle-glitch-misprices-cbeth-at-1-drains-1-78m/

[8] CNBC, “South Korean crypto firm accidentally sends out $44 billion in Bitcoin,” Feb. 2026. [Online]. Available: https://www.cnbc.com/2026/02/07/south-korean-crypto-firm-accidentally-sends-out-44-billion-in-bitcoin.html
